Let’s be honest for a moment.

AI is already in your classroom.

Students are using ChatGPT to brainstorm essays, generate summaries, and even prototype startup ideas. Some educators see this as exciting. Others see it as academic chaos.

And many are quietly asking the same question:

“Are we losing academic integrity?”

The real issue isn’t whether students will use AI.

They will.

The real question is:

How do universities teach students to use AI responsibly while protecting academic integrity?

This is where the ethics of AI in education becomes essential.

For business schools and entrepreneurship programs especially, ignoring AI isn’t an option. The companies students will build and work for will rely heavily on AI systems.

Which means educators must now teach two things at once:

- Business thinking

- Responsible AI use

And yes, that’s a challenge.

But it’s also a massive opportunity.


What is the ethics of AI in education?

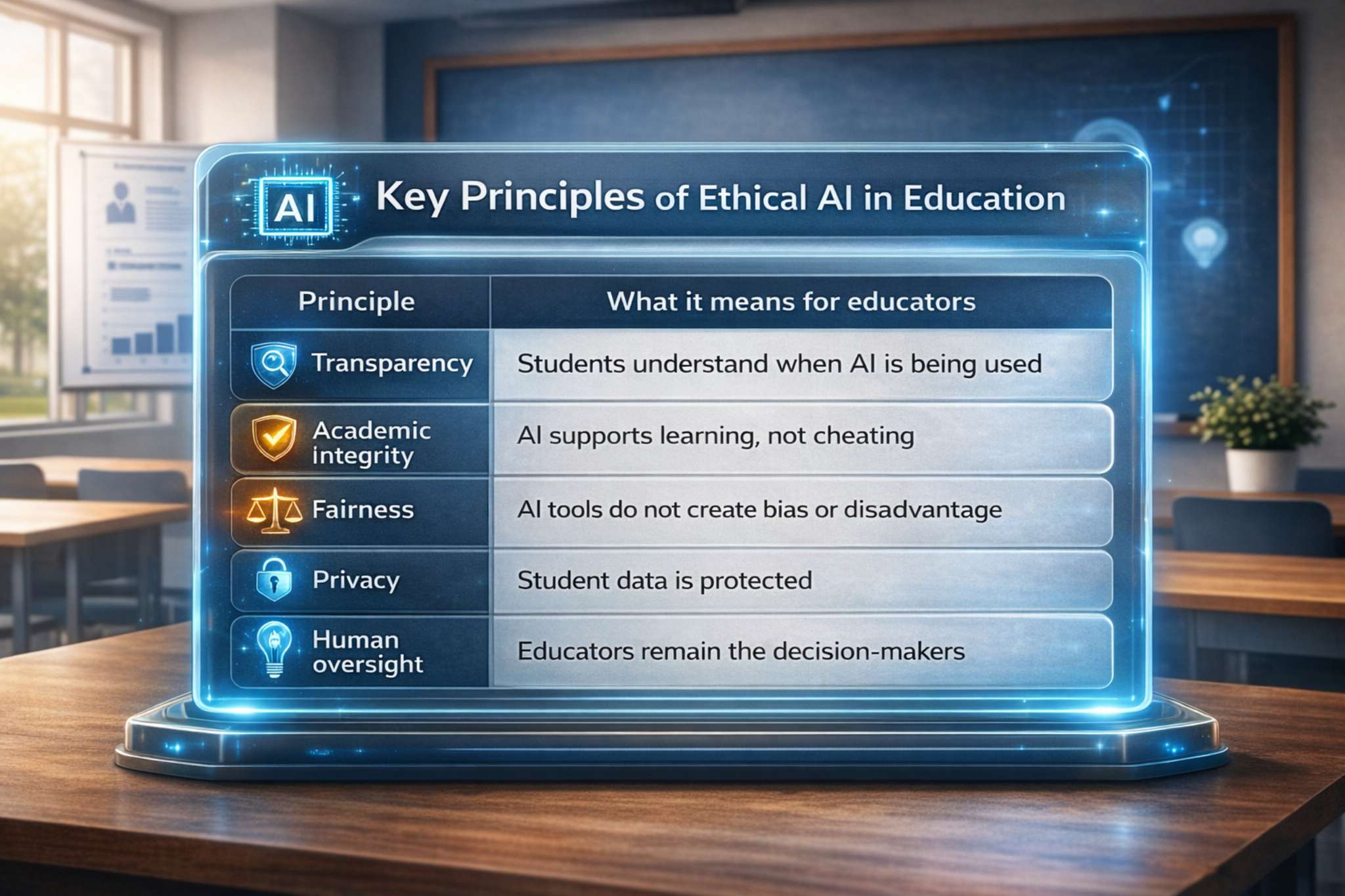

The ethics of AI in education refers to the principles and practices that ensure artificial intelligence is used responsibly, fairly, and transparently in learning environments.

In simple terms, ethical AI in education focuses on five key principles:

In other words:

AI should enhance learning, not replace it.

And it should empower students, not shortcut their thinking.

For educators teaching entrepreneurship or business strategy, ethical AI education becomes even more powerful when students can apply these principles in real scenarios. Platforms like Startup Wars allow students to experiment with AI-assisted decision-making while instructors maintain full transparency and academic oversight.

The real ethical challenges educators face

Let’s look at the issues educators are actually dealing with right now.

Academic integrity is the concern most professors raise first.

If AI can write essays, how do we know the work is original?

The answer isn’t simple, but it’s clear.

Traditional assessment models were built for a pre-AI world.

Long essays and take-home assignments are now easy for AI to assist with.

That means educators must rethink assessment strategies.

Better approaches include:

- oral defenses of ideas

- project-based learning

- iterative drafts

- real-world simulations

- collaborative problem solving

These methods make thinking visible, not just final outputs.

And that’s where learning actually happens.

Many AI tools collect data, sometimes more than educators realize.

Universities must ask:

- Where is student data stored?

- Who owns the outputs generated by AI?

- Are student interactions used to train models?

Without clear policies, institutions risk exposing student information or intellectual property.

For educators, this means choosing approved AI tools and avoiding platforms that require sensitive student data.

AI models are trained on massive datasets.

But datasets reflect the world, including its biases.

This means AI tools can unintentionally:

- reinforce stereotypes

- produce inaccurate information

- disadvantage certain groups of students

For educators teaching entrepreneurship or business strategy, this is actually a valuable teaching moment.

Students must learn that:

AI outputs are suggestions, not facts.

Critical thinking becomes even more important.

This is one reason many business schools are shifting toward experiential learning environments, where academic integrity becomes visible through real work rather than static assignments. Simulation platforms like Startup Wars help instructors evaluate how students think, collaborate, and make strategic decisions, not just what they submit on paper.

The mindset shift universities must make

Many institutions respond to AI with a simple rule:

“Students cannot use AI.”

The problem?

This rarely works.

Students will still use AI, just secretly.

Instead of banning AI, universities need a mindset shift.

Ethical AI education focuses on transparency and learning.

Students should be encouraged to disclose when AI helped them.

For example:

“This report used ChatGPT to brainstorm initial market research questions.”

That simple sentence turns AI use from a cheating risk into a learning tool.

It also mirrors real workplace practices.

Because in modern companies, professionals frequently collaborate with AI tools.

AI literacy is quickly becoming a career skill.

Companies increasingly expect employees to understand:

- prompt engineering

- AI-assisted research

- automation workflows

- AI-driven decision tools

For business schools, this means entrepreneurship education must evolve.

Students must learn how to build companies in an AI world.

Not just memorize theories.

In practice, this means giving students a safe environment to experiment with AI responsibly. Entrepreneurship simulations like Startup Wars mirror the real startup ecosystem, allowing students to test AI-assisted strategies while instructors still evaluate critical thinking and ethical decision-making.

A practical framework for ethical AI use in universities

Universities that successfully integrate AI typically apply a three-level framework.

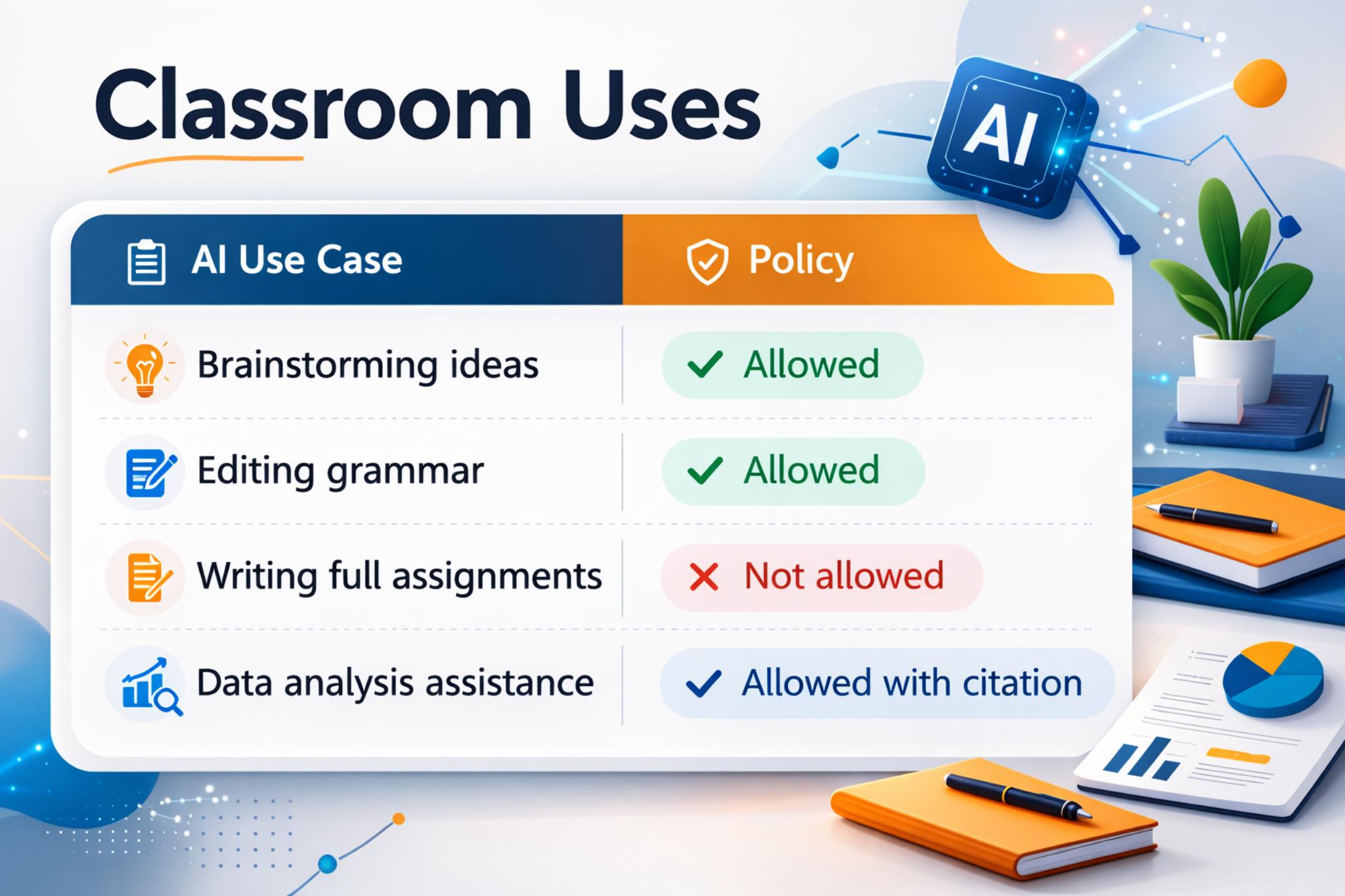

At the course level, instructors define how AI can be used.

Example policy:

Transparency is key.

Students should always declare AI assistance.

At the institutional level, universities should establish clear policies across departments.

These include:

- approved AI tools

- academic integrity guidelines

- student disclosure rules

- faculty training

Without institutional alignment, policies become inconsistent and confusing.

At the tool level, institutions should evaluate educational tools carefully.

Key questions include:

- Is student data protected?

- Does the tool reinforce critical thinking?

- Does it encourage authentic learning?

Tools should support pedagogy, not replace it.

Tools that support experiential learning are particularly effective within this framework. For example, platforms like Startup Wars allow instructors to set clear rules about AI use while students apply AI to real startup challenges such as market research, financial modeling, and product strategy.

Building an AI policy for your business school

Creating an AI ethics policy doesn’t need to be complicated.

A simple policy includes five sections:

1. Purpose

Why the institution uses AI tools in education.

2. Acceptable AI use

Define what students can use AI for.

Examples:

- research assistance

- brainstorming

- data analysis

3. Prohibited AI use

Examples include:

- submitting AI-generated work as original

- automated exam completion

- falsifying research outputs

4. Disclosure requirement

Students must disclose AI usage.

Example:

“AI tools assisted in drafting this report.”

5. Faculty oversight

Educators maintain final evaluation authority.

AI should never replace human judgment.

When paired with experiential learning platforms like Startup Wars, AI policies become easier to enforce because instructors can observe how students apply AI tools during startup simulations rather than relying solely on written assignments.

Why experiential learning matters more in the AI era

Here’s the paradox of AI in education:

The more powerful AI becomes…

The more important human learning experiences become.

Students don’t just need knowledge.

They need:

- decision-making

- collaboration

- entrepreneurship

- creativity

- problem solving

And these skills cannot be outsourced to AI.

That’s why many business schools are moving toward simulation-based learning.

Instead of lectures about startups, students build startups.

Instead of theory about market competition, students experience it.

Platforms like Startup Wars enable universities to create immersive entrepreneurial simulations where students:

- launch startup concepts

- compete in market scenarios

- test strategies

- collaborate in teams

These experiences naturally reinforce academic integrity.

Because learning happens through action, not just written assignments.

And yes, students can use AI tools responsibly within these simulations.

Just like real founders do.

Startup Wars was designed specifically for modern business education, where students must learn entrepreneurship, technology, and ethical decision-making simultaneously.

Conclusion: Ethics is not about restricting AI; it’s about guiding it

AI is not the end of education.

But it is the end of traditional teaching models.

The ethics of AI in education isn’t about banning technology.

It’s about ensuring that:

- students learn responsibly

- universities protect fairness and privacy

- educators remain central to the learning process

Most importantly, it’s about preparing students for a future where AI is everywhere.

And the best way to do that?

Give them environments where they can experiment, build, fail, and learn.

That’s exactly why forward-thinking universities are adopting experiential entrepreneurship platforms like Startup Wars.

Students don’t just study innovation.

They practice it.

📅 Schedule a Free Demo to see how Startup Wars transforms business education and prepares students for the AI-powered economy.

